1. Export the metric: First, configure your Pod to export its metrics to Cloud Monitoring, Google Managed Prometheus, or whatever monitoring system you use.
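As a rough illustration of this first step, the sketch below exposes a single gauge in the Prometheus text exposition format using only the Python standard library. It assumes a Prometheus-style scrape setup; the metric name `app_queue_depth`, the `/metrics` path, and port 8080 are hypothetical choices for the example, not anything the platform requires.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical application state: the value the autoscaler should react to.
QUEUE_DEPTH = {"value": 0}


def render_metrics(queue_depth: float) -> str:
    """Render one gauge in the Prometheus text exposition format."""
    return (
        "# HELP app_queue_depth Number of jobs waiting in the queue.\n"
        "# TYPE app_queue_depth gauge\n"
        f"app_queue_depth {queue_depth}\n"
    )


class MetricsHandler(BaseHTTPRequestHandler):
    """Serve the current metric value on /metrics (assumed scrape path)."""

    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_metrics(QUEUE_DEPTH["value"]).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8080) -> None:
    """Block forever, answering scrape requests on the given port."""
    HTTPServer(("", port), MetricsHandler).serve_forever()


if __name__ == "__main__":
    serve()
```

In a real workload you would more likely use an existing client library; the point here is only that the Pod itself has to publish the number before anything downstream can scale on it.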

2. Configure the “middleman”: Then, install and manage either the custom-metrics-stackdriver-adapter or prometheus-adapter in your cluster to act as a translator between your monitoring system and the HPA. Configuring these adapters isn’t always straightforward, and maintaining them can be complex and error-prone.

3. Navigate the IAM labyrinth: This is often the biggest hurdle. To allow the adapter to read the metrics you just exported, you must:

◦ Enable Workload Identity Federation on your cluster.

◦ Create a Google Cloud IAM Service Account.

◦ Create a Kubernetes Service Account and annotate it.

◦ Bind the two accounts together using an IAM policy binding.

◦ Grant the Google Cloud service account the specific IAM roles it needs to read the metrics.
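To make the length of that checklist concrete, here is a minimal sketch that assembles the `gcloud` and `kubectl` invocations typically involved, in the same order as the bullets above. It only builds and prints the commands; it does not run them. All argument values are placeholders, and `roles/monitoring.viewer` is an assumption about which role the adapter needs to read from Cloud Monitoring, so check the documentation for your adapter before relying on it.

```python
import shlex
from typing import List


def workload_identity_commands(
    project: str, cluster: str, location: str,
    namespace: str, gsa: str, ksa: str,
) -> List[List[str]]:
    """Return the CLI commands for the IAM steps, one argv list per command."""
    gsa_email = f"{gsa}@{project}.iam.gserviceaccount.com"
    # Identity of the Kubernetes service account inside the workload pool.
    ksa_member = f"serviceAccount:{project}.svc.id.goog[{namespace}/{ksa}]"
    return [
        # Enable Workload Identity Federation on the cluster.
        ["gcloud", "container", "clusters", "update", cluster,
         f"--location={location}", f"--workload-pool={project}.svc.id.goog"],
        # Create the Google Cloud IAM service account.
        ["gcloud", "iam", "service-accounts", "create", gsa,
         f"--project={project}"],
        # Create the Kubernetes service account and annotate it.
        ["kubectl", "create", "serviceaccount", ksa, "--namespace", namespace],
        ["kubectl", "annotate", "serviceaccount", ksa, "--namespace", namespace,
         f"iam.gke.io/gcp-service-account={gsa_email}"],
        # Bind the two accounts together.
        ["gcloud", "iam", "service-accounts", "add-iam-policy-binding", gsa_email,
         "--role", "roles/iam.workloadIdentityUser", "--member", ksa_member],
        # Grant the role used to read metrics (assumed: roles/monitoring.viewer).
        ["gcloud", "projects", "add-iam-policy-binding", project,
         "--member", f"serviceAccount:{gsa_email}",
         "--role", "roles/monitoring.viewer"],
    ]


if __name__ == "__main__":
    # Placeholder values for illustration only.
    for argv in workload_identity_commands(
        "my-project", "my-cluster", "us-central1",
        "custom-metrics", "metrics-adapter-gsa", "metrics-adapter-ksa",
    ):
        print(shlex.join(argv))
```

Six commands across two CLIs, each with its own failure modes, is the overhead this step adds before a single scaling decision is made.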

4. Manage operational risk: Once configured, your autoscaling logic now depends on your observability stack being available. If metric ingestion lags or the adapter fails, your scaling breaks.

In other words, your production environment suddenly hinges on your monitoring. Monitoring systems are part of your critical infrastructure, but production can generally keep running even if they fail. In this configuration, however, autoscaling depends on the monitoring system: if the metric readout or the system itself fails, the workload can no longer autoscale. Coupling scaling logic to the availability of an external observability stack creates an inherent operational risk. This kind of circular dependency is generally discouraged, as it complicates troubleshooting and reduces a service’s overall resilience.
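The effect of a missing metric can be sketched with the replica formula documented for the Horizontal Pod Autoscaler, `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The function below is a simplified model, not the controller's actual implementation: it ignores min/max replica bounds and stabilization windows, and it represents an unavailable metric as `None`, which is an assumption made for illustration.

```python
import math
from typing import Optional

# Default tolerance around the target ratio within which the HPA does not act.
TOLERANCE = 0.1


def desired_replicas(
    current_replicas: int,
    metric_value: Optional[float],
    target_value: float,
) -> int:
    """Simplified HPA calculation for a single metric."""
    if metric_value is None:
        # The metric could not be fetched (adapter down, ingestion lagging):
        # no recommendation can be computed, so the replica count stays put.
        return current_replicas
    ratio = metric_value / target_value
    if abs(ratio - 1.0) <= TOLERANCE:
        # Close enough to the target; skip scaling.
        return current_replicas
    return math.ceil(current_replicas * ratio)


if __name__ == "__main__":
    print(desired_replicas(4, 200, 100))   # load doubled, metric available
    print(desired_replicas(4, None, 100))  # load doubled, metric unavailable
```

With the metric available, a doubling of load doubles the replica count. With the metric missing, the workload stays at four replicas no matter how much traffic arrives, which is the failure mode described above.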

Furthermore, because configuring HPA to scale on custom metrics has historically been so clunky, cumbersome, and error-prone, Kubernetes users often adopt third-party solutions. These solutions and their complex setups can be difficult to keep in sync with GKE updates and upgrade cycles.

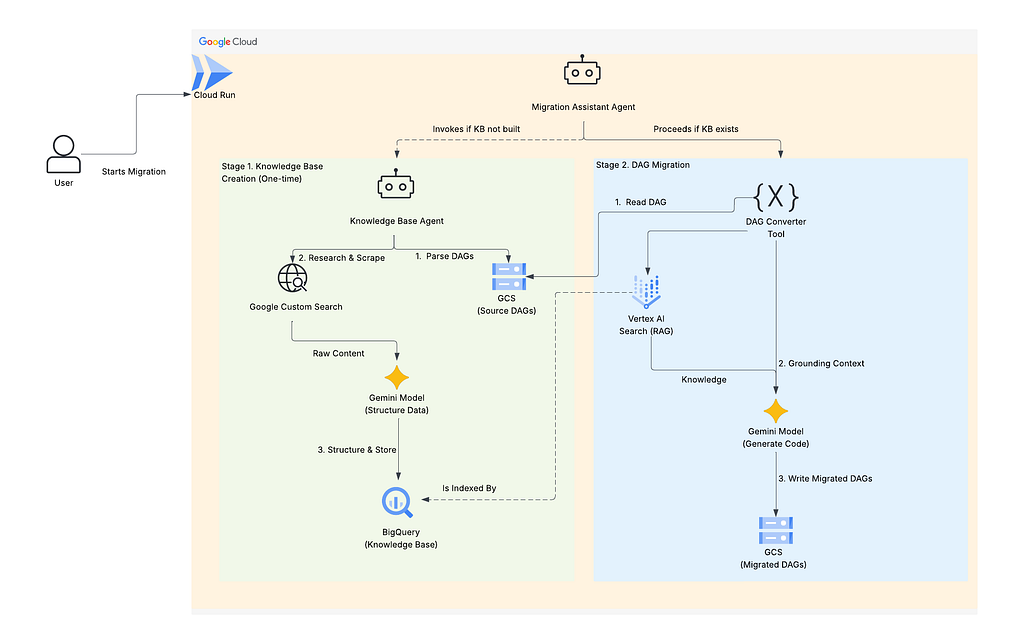

Agentless, native autoscaling

With native support for custom metrics in GKE, we’ve removed the middleman and fundamentally redesigned the autoscaling flow. Scaling workloads on real-time custom metrics is now as simple as scaling on memory or CPU, with no complex or circular dependencies on monitoring systems, adapters, service accounts, or IAM roles.

No agents, no adapters, no complex IAM: Custom metrics are now directly sourced from your Pods and delivered to the HPA. With this agentless architecture, you no longer need to maintain a custom metrics adapter or manage complex Workload Identity bindings.

Native support for custom metrics:

Source Credit: https://cloud.google.com/blog/products/containers-kubernetes/gke-now-supports-custom-metrics-natively/