Welcome to the February 16–28, 2026 edition of Google Cloud Platform Technology Nuggets. The nuggets are also available on YouTube.

Here are a couple of lovely infographics summarizing this edition of the newsletter, courtesy of NotebookLM.

AI and Machine Learning

Looking for a quick summary of everything that Google Cloud announced in AI this month? Bookmark this page. Key updates include the preview of Gemini 3.1 Pro for reasoning tasks in Vertex AI and Google Antigravity, the general availability of Claude 4.6 Opus and Sonnet, new image and video capabilities through Nano Banana 2, and updates to Veo 3.1.

Google Cloud has released Gemini 3.1 Pro, a model designed for complex problem-solving and reasoning tasks. It is currently available in preview through Vertex AI, Gemini Enterprise, and several developer tools including the Gemini CLI and Android Studio. Technical evaluations indicate a 15% performance improvement over previous versions, specifically in areas such as 3D transformations, rotation order logic, and grounded reasoning using both tabular and unstructured data. The model is also more efficient, as it generates fewer output tokens while maintaining reliability. Check out the blog post.

Nano Banana 2 is now available in preview via the Gemini API in Vertex AI. The model supports high-resolution upscaling to 2K and 4K, accurate text rendering directly into images, and native support for multiple aspect ratios including 16:9 and 9:16. For more details, check out the blog post.

Provisioned Throughput on Vertex AI, which provides reserved resources for capacity and performance, now includes support for Anthropic models in private preview and open-source models like Llama 4, Qwen3, GLM-4.7, and DeepSeek-OCR. For more details, check out the blog post.

Data Analytics

Looking for a summary of key announcements in the world of Google Cloud databases, analytics, and tools? Check out “What’s new with Google Data Cloud”. Key announcements in this period include managed Model Context Protocol (MCP) support for databases like AlloyDB, Spanner, and Cloud SQL to connect AI models with operational data. BigQuery now features a Conversational Analytics API and built-in agent capabilities for natural language data exploration and visualization.

Google Cloud has launched managed Model Context Protocol (MCP) servers for several database services, including AlloyDB, Spanner, Cloud SQL, Firestore, and Bigtable. These servers allow AI agents to connect to operational data using a standard interface without requiring users to deploy or manage additional infrastructure. Additionally, a new Developer Knowledge MCP server provides an API to connect IDEs directly to Google’s technical documentation. Security is managed through Identity and Access Management (IAM) for authentication and Cloud Audit Logs for tracking agent interactions. Because these servers use an open standard, they are compatible with both Gemini and third-party AI clients. For more details, check out the blog post.

BigQuery has introduced autonomous embedding generation to simplify how developers manage data for AI applications and Retrieval-Augmented Generation (RAG). Previously, users had to manually build and maintain pipelines to detect data changes, call embedding models, and synchronize vectors. This new feature allows you to define an embedding column directly in a table using SQL, which BigQuery then populates and updates automatically in the background as source data changes. The system integrates with Vertex AI models and features a new AI.SEARCH function, which lets you perform vector searches without manually specifying the underlying embedding model or configuration. Check out the post.
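To make the "before" picture concrete, here is a minimal sketch of the kind of manual sync loop that the new feature is meant to replace: detect changed rows, call an embedding model, and keep the stored vectors in step with the source. Everything in it is an assumption for illustration. `toy_embed` is a deterministic stand-in rather than a Vertex AI model, the tables are plain dictionaries, and none of these names come from the blog post or from BigQuery.

```python
import hashlib


def toy_embed(text, dim=4):
    """Hypothetical stand-in for a real embedding model call."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [b / 255.0 for b in digest[:dim]]


def content_hash(text):
    """Fingerprint used to detect whether a row's text has changed."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def sync_embeddings(source_rows, vector_store):
    """Re-embed only new or changed rows and drop vectors for deleted rows.

    source_rows: dict of row_id -> text
    vector_store: dict of row_id -> {"hash": str, "vector": list}
    Returns the list of row ids that were (re)embedded.
    """
    updated = []
    for row_id, text in source_rows.items():
        fingerprint = content_hash(text)
        entry = vector_store.get(row_id)
        if entry is None or entry["hash"] != fingerprint:
            vector_store[row_id] = {
                "hash": fingerprint,
                "vector": toy_embed(text),
            }
            updated.append(row_id)
    # Remove vectors whose source rows no longer exist.
    for row_id in list(vector_store):
        if row_id not in source_rows:
            del vector_store[row_id]
    return updated
```

In a real deployment this loop would also need scheduling, retries, and batching, which is the maintenance burden the post says BigQuery now handles in the background.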

We’ve touched upon Conversational Analytics in BigQuery before. In this edition, the focus is on the Conversational Analytics API, which allows developers to build data agents that interface with BigQuery using natural language. The blog post outlines how these agents can manage conversation history and automatically generate real-time data visualizations, such as charts and tables, to make complex datasets more accessible to non-technical users. Key use cases highlighted include self-service operations for users of SaaS products and dynamic reporting, where users can ask follow-up questions about the initial reports presented to them.

The Spanner columnar engine is now in preview, enabling users to serve Apache Iceberg lakehouse data with low latency. This engine introduces a specialized columnar storage format alongside traditional row-based storage to accelerate analytical queries on live operational data. It utilizes vectorized execution to process data batches efficiently and includes an automatic query handler that redirects large-scan analytical queries to the columnar representation. Check out the post.

Security and Identity

The first Cloud CISO Perspectives for February 2026 is out. In the perspective, the Google Threat Intelligence Group released a report detailing how threat actors are currently utilizing AI across five specific categories. These include model extraction attacks where adversaries use knowledge distillation to steal IP and training data, and AI-augmented operations where government-backed groups use LLMs for reconnaissance, social engineering, and coding.

The second Cloud CISO Perspectives for February 2026 is out too. The edition highlights how Google is tackling key security topics. As an example, to counter AI-driven threats like automated malware and data poisoning, Google is deploying agentic security operations to automate threat hunting and alert triaging.

Developers & Practitioners

If you have been spending time building agents, one of the key expectations from users is that agents are reliable. That requires a sound application of agent evaluation techniques. The article argues that subjective “vibe checks” are no longer enough and that you need to shift to a disciplined continuous evaluation (CE) framework for building reliable AI agents. Check out the post, which covers not just the approach but also patterns, with code, that you can use today.
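As a rough illustration of the idea, and not the framework from the article, a continuous evaluation loop can be reduced to three pieces: a fixed set of test cases, a scoring function, and a gate that blocks a release when the score regresses. The sketch below assumes a deliberately simple substring check; `EvalCase`, `run_eval`, `gate`, and `echo_agent` are hypothetical names introduced here.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    prompt: str
    must_contain: str  # simple, deterministic pass criterion


def run_eval(agent: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Return the fraction of cases whose response contains the expected text."""
    if not cases:
        raise ValueError("at least one eval case is required")
    passed = sum(
        1 for case in cases
        if case.must_contain.lower() in agent(case.prompt).lower()
    )
    return passed / len(cases)


def gate(score: float, baseline: float, tolerance: float = 0.0) -> bool:
    """Allow a release only if the score has not dropped below the baseline."""
    return score >= baseline - tolerance


def echo_agent(prompt: str) -> str:
    """Toy stand-in for a real agent (hypothetical)."""
    return f"You asked: {prompt}"
```

Running `run_eval` on every change and comparing against a stored baseline with `gate` is what turns a one-off check into a continuous one. Real setups would typically replace the substring check with richer scorers.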

If you are looking to run chatbots on Google Cloud and integrate long-term memory into them via a variety of databases, then check out this blog post that outlines this strategy. It looks at using Memorystore for Redis for short-term context and Cloud Bigtable as a mid-term system of record, while long-term archival and analytical processing are handled by BigQuery. In addition, unstructured data like images and audio is stored in Cloud Storage, with Pub/Sub and Dataflow used to move data across layers.
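The layering described above can be sketched in a few lines of plain Python. This is an in-memory toy under stated assumptions, not the architecture from the blog post: a `deque` stands in for Memorystore for Redis, one list for Bigtable, and another list for BigQuery, and the `TieredMemory` class and its methods are hypothetical.

```python
from collections import deque


class TieredMemory:
    """In-memory stand-ins for the three storage tiers (illustrative only)."""

    def __init__(self, short_term_size=3):
        if short_term_size < 1:
            raise ValueError("short_term_size must be at least 1")
        self.short_term = deque()    # stand-in for Memorystore for Redis
        self.system_of_record = []   # stand-in for Bigtable
        self.archive = []            # stand-in for BigQuery
        self.short_term_size = short_term_size

    def add_message(self, role, text):
        """Record every message durably and keep only recent ones as context."""
        message = {"role": role, "text": text}
        self.system_of_record.append(message)
        self.short_term.append(message)
        while len(self.short_term) > self.short_term_size:
            self.short_term.popleft()

    def context(self):
        """Return the recent messages to pass to the chatbot."""
        return list(self.short_term)

    def archive_all_but(self, keep_last):
        """Move all but the newest keep_last records into the archive tier."""
        if keep_last < 0:
            raise ValueError("keep_last must be non-negative")
        cut = max(len(self.system_of_record) - keep_last, 0)
        moved = self.system_of_record[:cut]
        self.archive.extend(moved)
        self.system_of_record = self.system_of_record[cut:]
        return len(moved)
```

In the design the post describes, the movement between tiers is handled by Pub/Sub and Dataflow; here the explicit `archive_all_but` call plays that role.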

Looking to move from prototyping applications in Google AI Studio to deploying them on Google Cloud? This article is a guide for developers making that transition and scaling their applications. It explains how to set up a Google Cloud Project to access advanced models like Gemini 3 Pro Image and utilize infrastructure for hosting, storage, and global deployment. The post details the components of the Google Cloud Free Tier, which includes $300 in credits and ongoing monthly usage limits for services such as Cloud Run, Compute Engine, and BigQuery.

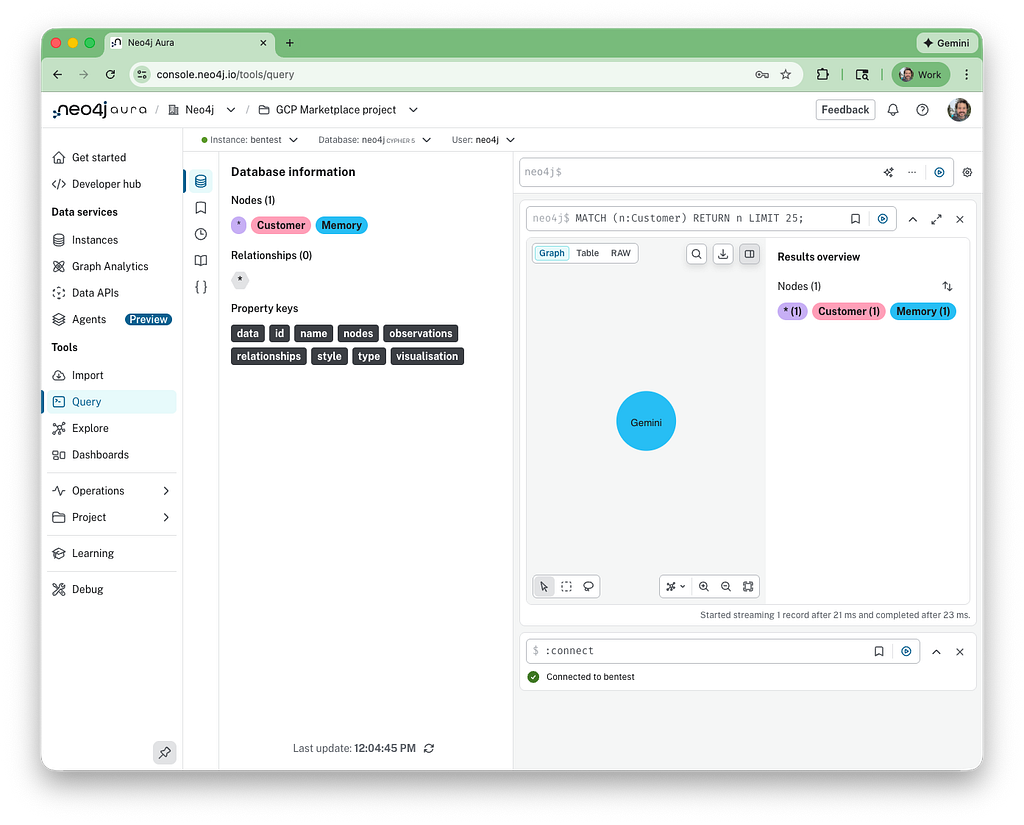

If you have been using Neo4j on Google Cloud, you can look at the Neo4j Gemini CLI extension, which integrates graph database capabilities into a terminal environment using the Model Context Protocol (MCP). The extension allows you to manage Neo4j Aura infrastructure, convert natural language into Cypher queries, perform interactive data modeling, and use the graph as a persistent memory store for agentic workflows. To use the extension, you need to subscribe to Neo4j Aura via the Google Cloud Marketplace, configure specific environment variables for authentication, and install the extension through the Gemini CLI. Check out the blog post for more details.

Infrastructure

Google Cloud is expanding its global network infrastructure through the America-India Connect initiative, anchored by a $15 billion investment in India. This project establishes a new international subsea gateway in Visakhapatnam (Vizag) and adds three subsea paths connecting India to Singapore, South Africa, and Australia. Check out the announcement.

Learning

Google has published a series of key technical guides for developing production-ready AI agents:

- Introduction to Agents

- Tools and Interoperability with MCP

- Context Engineering

- Agent Quality

- Prototype to Production

Check out the post for an overview of these guides and links to download them.

Eventarc Advanced is a serverless eventing platform designed to manage complex, event-driven architectures by separating governance from integration logic. It introduces a two-layer model: a bus for platform administrators to enforce centralized security policies, such as Identity and Access Management (IAM) and VPC Service Controls, and a pipeline for developers to handle distributed logic. Check out this post to learn more about this service and how to get started.

Write for Google Cloud Medium publication

If you would like to share your Google Cloud expertise with your fellow practitioners, consider becoming an author for the Google Cloud Medium publication. Reach out to me via comments and/or fill out this form and I’ll be happy to add you as a writer.

Stay in Touch

Have questions, comments, or other feedback on this newsletter? Please send Feedback.

If any of your peers are interested in receiving this newsletter, send them the Subscribe link.

Google Cloud Platform Technology Nuggets — February 16–28, 2026 was originally published in Google Cloud – Community on Medium, where people are continuing the conversation by highlighting and responding to this story.

Source Credit: https://medium.com/google-cloud/google-cloud-platform-technology-nuggets-february-16-28-2026-5f63558b2cf8?source=rss—-e52cf94d98af—4