Building an Enterprise-Grade Multimodal RAG Platform on Google Vertex AI | A Zero-to-Production Guide

Welcome to the Enterprise Multimodal RAG Tutorial Series.

If you have ever built a Retrieval-Augmented Generation (RAG) prototype on your laptop, you know it feels like magic. But the moment you try to scale that prototype for a real enterprise, it usually falls apart.

Why? Because enterprise knowledge isn’t just flat text. It is a chaotic mix of scattered SharePoint folders, complex 50-page legal PDFs, heavy financial spreadsheets, and 45-minute Zoom recordings.

Standard vector database pipelines flatten all of this data into plain text, which destroys table structure and discards visual context, so the model downstream ends up hallucinating answers. Furthermore, standard RAG tutorials often ignore enterprise realities like Role-Based Access Control (RBAC), network security, and cold-start latency.

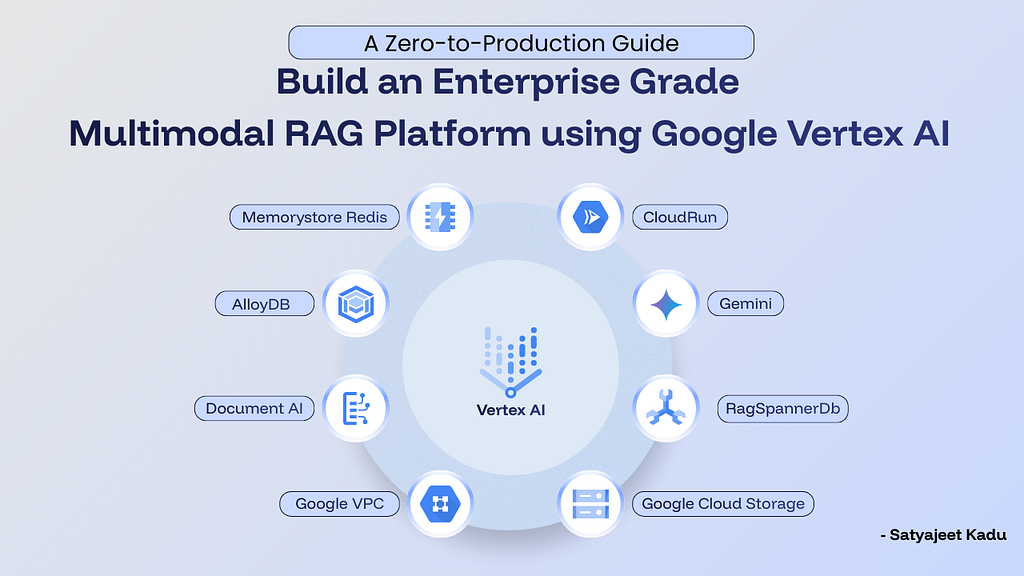

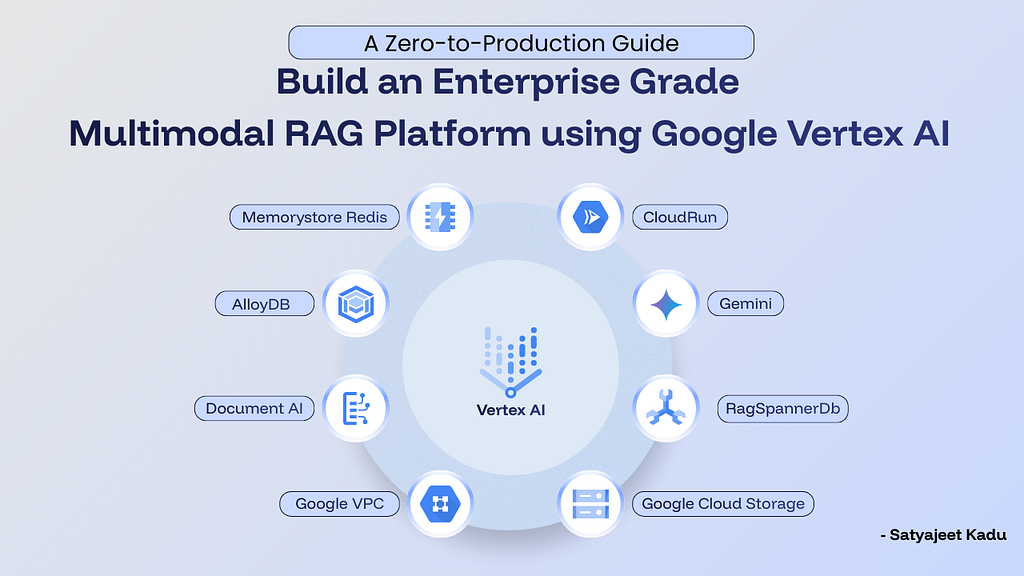

I set out to build a unified platform to solve this — a secure “Dual Brain” architecture utilizing Google Vertex AI RAG Engine, AlloyDB, Gemini 2.5, Cloud Run, Memorystore for Redis and more!

This series is the complete, step-by-step blueprint of that journey. We are going to build an Agentic RAG system that intelligently routes data, understands images and video, respects strict corporate permissions, and runs in a highly secure Virtual Private Cloud (VPC).

📚 The Tutorial Series Breakdown

Whether you want to read it end-to-end or jump to a specific architectural layer, here is what the series covers:

- Part 1: The Architecture & “Dual Brain” Strategy — We tear down the monolithic vector database approach and design a flexible system. You will learn the high-level flow of the Ingestion (Smart Router) and Retrieval (Neural Router) pipelines.

- Part 2: The Data Layer & Infrastructure — We stop talking and start building. We provision a secure, zero-public-exposure infrastructure using Google Cloud Storage, Vertex AI RagManagedDb, AlloyDB with pgvector, and a Redis Semantic Cache bridged by a Serverless VPC Connector.

- Part 3: The Ingestion Pipeline — We build a “Smart Router” that inspects incoming files. It routes complex PDFs to Document AI, 45-minute videos to Gemini 2.5 Pro for visual/audio extraction, and structured CSVs directly to AlloyDB for autonomous vector embeddings.

- Part 4: The Retrieval Pipeline — The moment of truth. We implement a Semantic Cache, an AI Sentinel for prompt-injection security, strict RBAC to prevent data leakage, and a Federated Neural Router that queries multiple databases concurrently and re-ranks the results.

- Part 5: Into Production (Agentic RAG) — We evolve from static queries to an autonomous Agent using Gemini Function Calling. Finally, we lock the system down for production using Google Secret Manager, Gunicorn tuning for heavy AI workloads, and Cloud Build CI/CD pipelines to GKE.
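
To make the "Smart Router" idea from Part 3 concrete before the series gets there, here is a minimal sketch of routing by file type. It is only an illustration under stated assumptions, not the implementation the series builds: the `Route` enum, the `route_file` function, the extension table, and the `RAG_ENGINE` fallback are all hypothetical names chosen for this example, and a real router would probably inspect file contents rather than trusting the extension.

```python
from enum import Enum
from pathlib import Path


class Route(Enum):
    """Hypothetical ingestion targets, named after the backends in the series."""
    DOCUMENT_AI = "document_ai"    # complex PDFs
    GEMINI_VIDEO = "gemini_video"  # long video recordings
    ALLOYDB = "alloydb"            # structured CSVs
    RAG_ENGINE = "rag_engine"      # assumed default for everything else


# Assumed extension table for illustration only.
_ROUTES = {
    ".pdf": Route.DOCUMENT_AI,
    ".mp4": Route.GEMINI_VIDEO,
    ".mov": Route.GEMINI_VIDEO,
    ".csv": Route.ALLOYDB,
}


def route_file(filename: str) -> Route:
    """Pick an ingestion backend from the file extension (case-insensitive)."""
    suffix = Path(filename).suffix.lower()
    return _ROUTES.get(suffix, Route.RAG_ENGINE)
```

Calling `route_file("contract.PDF")` would select the Document AI path, while a file with an unknown or missing extension falls through to the assumed default.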

🛠️ Prerequisites & Getting Started

To follow along and build this in your own environment, you will need:

- A Google Cloud Project with billing enabled.

- Google Cloud CLI installed and configured on your machine.

- Basic familiarity with Python (FastAPI) and Docker.

By the end of this series, you won’t just have a chatbot. You will have a highly scalable, secure, and autonomous enterprise knowledge platform.

Grab a coffee, spin up your GCP terminal, and let’s get building!

Building an Enterprise-Grade Multimodal RAG Platform on Google Vertex AI | A Zero-to-Production… was originally published in Google Cloud – Community on Medium.

Source Credit: https://medium.com/google-cloud/building-an-enterprise-grade-multimodal-rag-platform-on-google-vertex-ai-a-zero-to-production-e3e4b7c53056?source=rss—-e52cf94d98af—4