Recently, I led the development of an MVP (Minimum Viable Product) focused on building AI agents for automated code refactoring.

The goal was to explore how far we could push Gemini 2.5 Pro’s analytical capabilities in a real, industrial-grade environment — beyond simple code formatting or syntax conversion.

This was not a “convert my code to X” project.

It required deep prompt engineering, multiple iterations, and a thorough understanding of how agent-based systems reason, manage context, and execute instructions at scale.

Along the way, I often used the LLM itself as a debugging partner — asking questions like:

- “Why am I seeing this behavior?”

- “What is wrong with this prompt?”

- “Why is my agent not doing what I instructed?”

Those conversations became part of the learning process.

The Real-World Challenge: Legacy-to-Cloud Dependencies

One of the main complexities of this project came from working with a partially migrated legacy database.

Only parts of the system had been moved to cloud infrastructure, and many object schemas were incomplete.

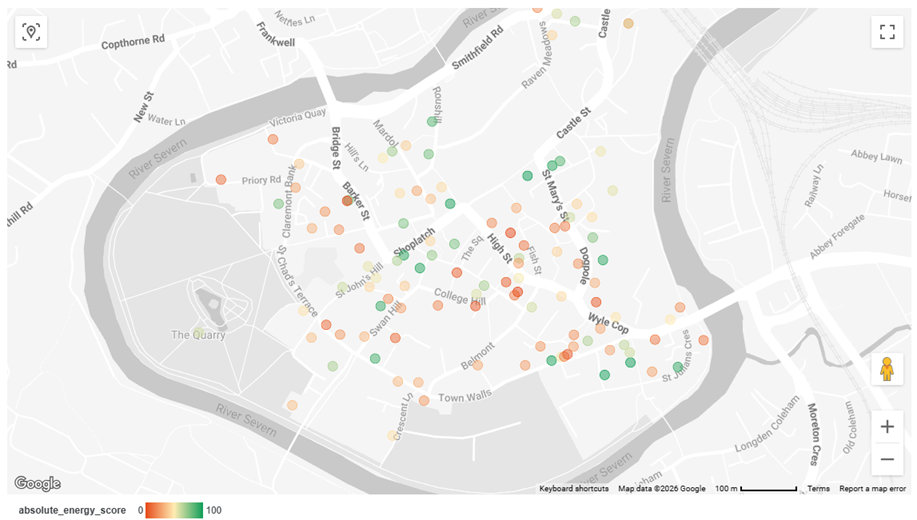

The diagram above illustrates a simplified version of the dependency structure.

In practice, this meant:

- In the legacy environment, Layer-3 objects are built from Layer-1 and Layer-2.

- In the cloud environment, only Layer-1 schemas exist.

- Layer-2 and Layer-4 logic is missing or incomplete.

- Layer-5 objects, however, still depend on all previous layers.

My goal was to reliably generate Layer-5 outputs while keeping the transformation logic independent of unstable or incomplete intermediate layers.

In other words, I wanted Layer-5 scripts that worked even when Layer-2 and Layer-4 were unavailable.

Inlining Missing Logic

To achieve this, I chose to inline the logic of Layer-2 and Layer-4 wherever needed directly into the Layer-5 transformation scripts.

This meant:

- Reconstructing missing dependencies

- Embedding intermediate logic

- Preserving correctness

- Avoiding tight coupling with incomplete layers

As a result, this became far more complex than a simple schema migration or refactoring exercise.

I had to prompt Gemini not only to refactor code, but also to reason about:

- Hierarchical dependencies

- Object derivation paths

- Missing-layer reconstruction

- Context-aware inlining

This required carefully designed prompts that guided the model to reason structurally, not just syntactically.
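To make the structural reasoning concrete, here is a minimal sketch of the kind of dependency walk involved. It is not the project's code. The graph is a layer-level simplification: the Layer-3 and Layer-5 edges follow the description above, while the Layer-2 and Layer-4 edges, the names `DEPENDS_ON`, `MISSING_IN_CLOUD`, and `inline_plan` are assumptions made for illustration.

```python
from typing import Dict, List, Set

# Layer-level dependency graph (hypothetical simplification).
DEPENDS_ON: Dict[str, List[str]] = {
    "layer1": [],
    "layer2": ["layer1"],                                # assumed edge
    "layer3": ["layer1", "layer2"],                      # from the article
    "layer4": ["layer3"],                                # assumed edge
    "layer5": ["layer1", "layer2", "layer3", "layer4"],  # from the article
}

# Layers whose logic is missing or incomplete in the cloud environment.
MISSING_IN_CLOUD: Set[str] = {"layer2", "layer4"}


def inline_plan(
    target: str,
    depends_on: Dict[str, List[str]],
    missing: Set[str],
) -> List[str]:
    """Return the missing layers that `target` depends on (directly or
    transitively), ordered so that each layer comes after its own dependencies."""
    plan: List[str] = []
    visited: Set[str] = set()

    def visit(node: str) -> None:
        if node in visited:
            return
        visited.add(node)
        # Visit dependencies first so the plan comes out in dependency order.
        for dep in depends_on.get(node, []):
            visit(dep)
        if node in missing and node != target:
            plan.append(node)

    visit(target)
    return plan


if __name__ == "__main__":
    print(inline_plan("layer5", DEPENDS_ON, MISSING_IN_CLOUD))
```

The returned order matters: logic for a lower missing layer has to be embedded before anything that builds on it. In a prompt, the same information can be passed to the model as an explicit, ordered list instead of asking it to infer the derivation path on its own.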

Key Lessons from Building Agent-Based Systems

1. Keep Agent Instructions Simple and Direct

With ADK (Agent Development Kit) agents, clarity is critical. Long, multi-layered instructions tend to confuse agents.

Interestingly:

- Gemini’s content generation endpoint handled complex prompts well.

- ADK agents often struggled with the same instructions. Even when token limits were not exceeded, overly verbose prompts could cause agents to stall or fail.

Lesson:

Short, focused, and unambiguous instructions work best.

2. Manage Context Growth Carefully

ADK agents accumulate context from:

- Tool outputs

- Intermediate reasoning

- Other agents’ responses

If these outputs are large, context grows rapidly.

Over time, this led to:

- Context exhaustion

- Resource limits

- Frequent 429 (“Resource exhausted”) errors

To mitigate this, I minimized all intermediate outputs.

Lesson:

Return only what is necessary.
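One way to apply this is to trim every tool result before it is handed back to the agent. The sketch below is framework-agnostic plain Python, not an ADK API; the helper name `compact_tool_result`, the field names, and the 500-character default are all assumptions chosen for illustration.

```python
from typing import Any, Dict, Iterable


def compact_tool_result(
    result: Dict[str, Any],
    keep: Iterable[str],
    max_chars: int = 500,
) -> Dict[str, Any]:
    """Keep only the listed fields and truncate long string values."""
    compact: Dict[str, Any] = {}
    for key in keep:
        if key not in result:
            continue
        value = result[key]
        if isinstance(value, str) and len(value) > max_chars:
            dropped = len(value) - max_chars
            value = value[:max_chars] + f"... [truncated {dropped} chars]"
        compact[key] = value
    return compact


if __name__ == "__main__":
    # Hypothetical raw output of a refactoring tool.
    raw = {
        "status": "ok",
        "output_path": "out/layer5_script.sql",
        "generated_sql": "SELECT 1;\n" * 5000,  # large payload the agent does not need
        "log": "x" * 2000,
    }
    print(compact_tool_result(raw, keep=("status", "output_path", "log"), max_chars=100))
```

Large artifacts such as the generated script can be written to storage and referenced by path, so the agent's context only carries a status and a pointer.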

3. Eliminate Contradictions in Prompts

Conflicting instructions — even subtle ones — lead to inconsistent behavior.

When different parts of a prompt compete, agents may follow different reasoning paths across runs.

Lesson:

Ensure logical consistency in every instruction.

4. Break Complex Tasks into Atomic Steps

Large, multi-step prompts rarely execute reliably.

Instead, I decomposed workflows into smaller, independent tasks and chained them together.

This improved:

- Predictability

- Debuggability

- Performance

Lesson:

One task at a time leads to better outcomes.
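A minimal sketch of this kind of chaining is shown below. The step names and the stub functions are hypothetical; in a real workflow each step would wrap one narrowly scoped model or agent call, which is replaced here by plain Python so the example runs on its own.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, str]
Step = Tuple[str, Callable[[State], State]]


def run_pipeline(steps: List[Step], state: State) -> State:
    """Run steps in order; each step sees the current state and returns
    only the keys it adds or changes."""
    for name, step in steps:
        try:
            update = step(state)
        except Exception as exc:
            # Naming the failing step is what makes small steps easy to debug.
            raise RuntimeError(f"step '{name}' failed: {exc}") from exc
        state = {**state, **update}
    return state


# --- Hypothetical steps (stand-ins for single-purpose model calls) ---

def list_dependencies(state: State) -> State:
    return {"dependencies": "layer2,layer4"}


def inline_logic(state: State) -> State:
    deps = state["dependencies"].split(",")
    notes = "".join(f"\n-- inlined: {dep}" for dep in deps)
    return {"script": state["script"] + notes}


def validate(state: State) -> State:
    if "-- inlined:" not in state["script"]:
        raise ValueError("nothing was inlined")
    return {"status": "ok"}


STEPS: List[Step] = [
    ("list_dependencies", list_dependencies),
    ("inline_logic", inline_logic),
    ("validate", validate),
]

if __name__ == "__main__":
    print(run_pipeline(STEPS, {"script": "SELECT 1"}))
```

Because each step has one job and a small input, a failure points at a single instruction rather than at one long prompt.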

Final Thoughts

Building production-grade AI agents is not just about choosing a powerful model.

It requires:

- Thoughtful system design

- Careful prompt engineering

- Context governance

- Continuous experimentation

Through this MVP, I learned that:

- Simplicity scales better than complexity

- Context is a critical resource

- Prompt design is an engineering discipline

- LLMs can be powerful collaborators when used intentionally

Perhaps the most valuable insight was realizing that modern AI systems are not “plug-and-play.” They require the same rigor, testing, and architectural thinking as any other production system.

If you are building agent-based workflows in real-world environments, I hope these lessons help you navigate some of the challenges I encountered.

The Hidden Engineering Behind Successful AI Agents was originally published in Google Cloud – Community on Medium.

Source Credit: https://medium.com/google-cloud/the-hidden-engineering-behind-successful-ai-agents-f746f44b4c69?source=rss----e52cf94d98af---4